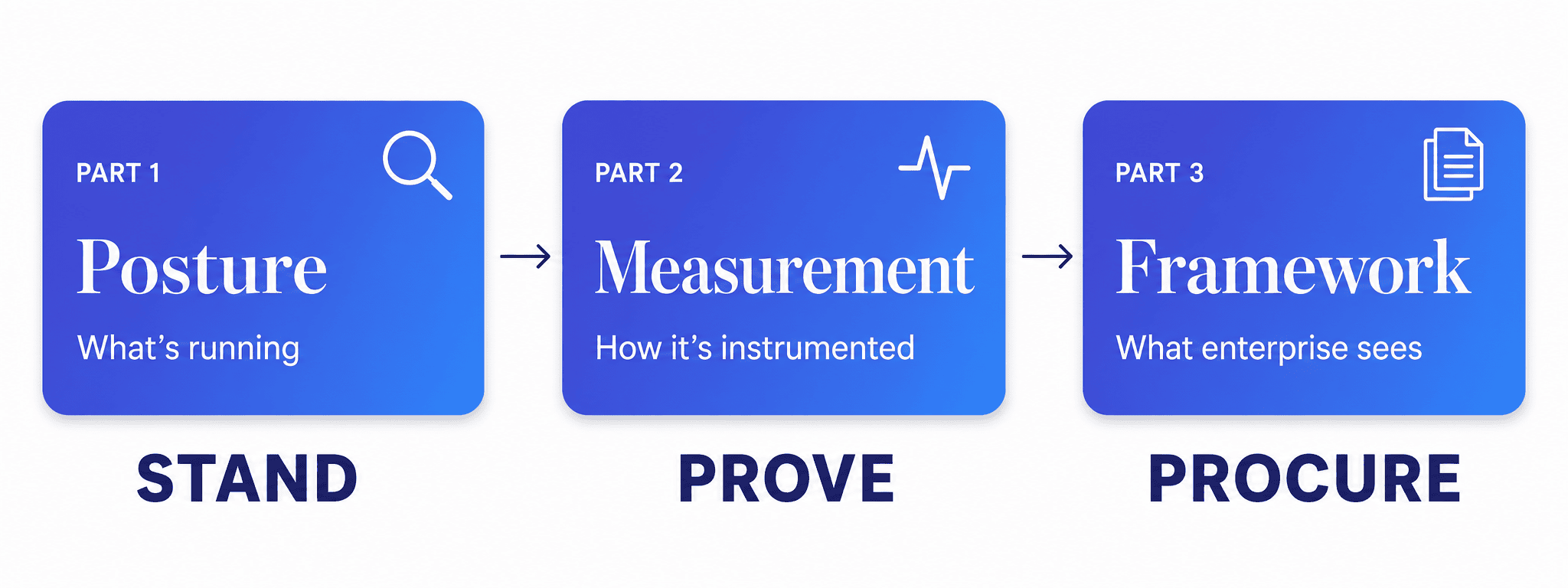

This is the third in a three-part series on how we approach AI governance with clients moving into enterprise markets. Part 1 covered the posture assessment. Part 2 covered building the measurement layer. This piece presents the full framework — and the method we use to build one.

Six weeks after we started, our client was back in the room with the same enterprise buyer who had stalled his last procurement conversation.

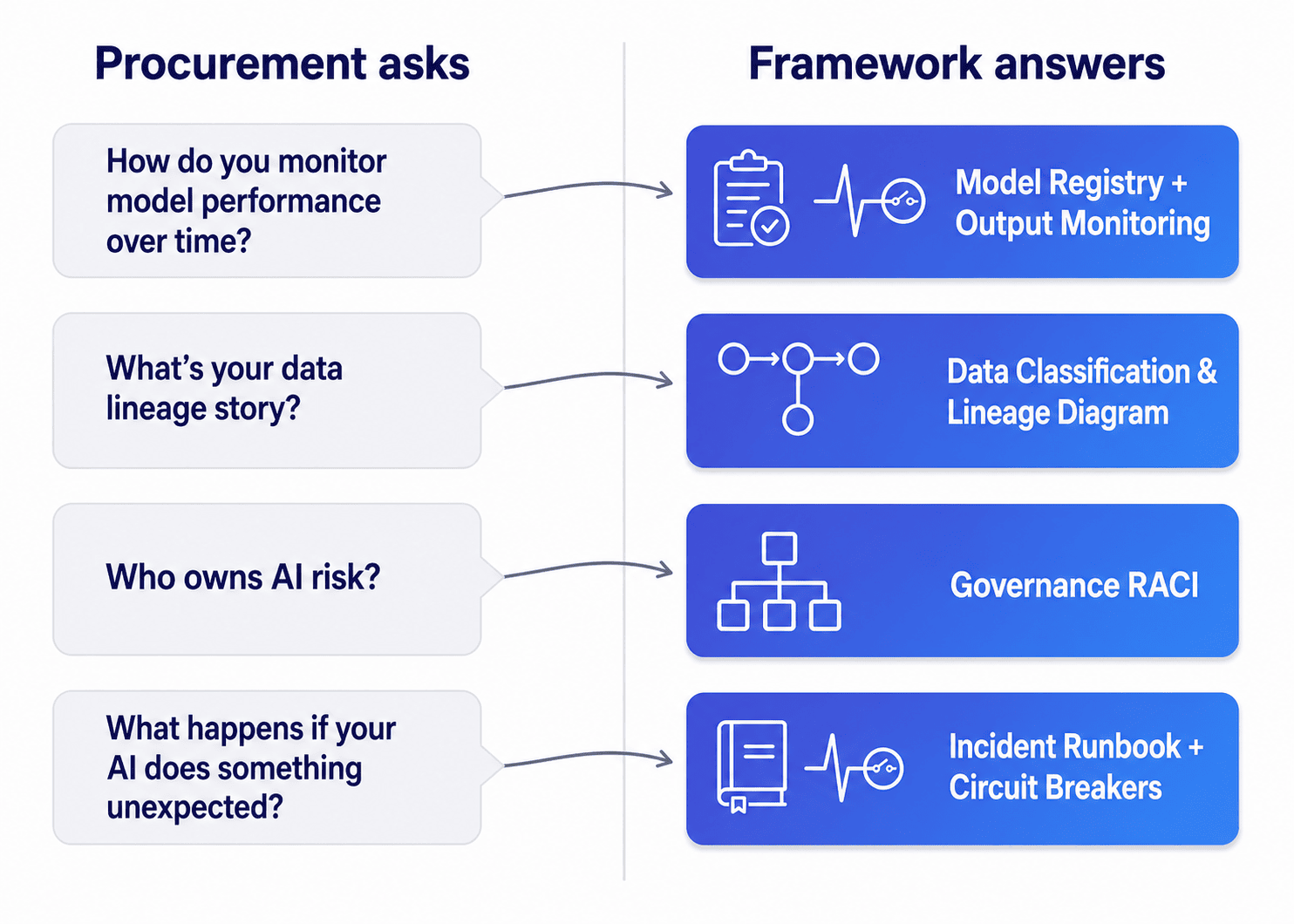

The questions came faster this time. How do you monitor model performance over time? What is your data lineage story? Who owns AI risk in your organization? What happens when one of your models behaves unexpectedly?

He had answers. More than that — he had artifacts. A model registry he could screen-share. A data classification map showing every input across every AI system. An incident response runbook with a named owner on the cover. A stakeholder impact diagram for each AI feature in the product. None of it was theatrical. All of it was current.

Halfway through the call, the buyer paused and said something none of us had expected. “This is more developed than what most of our internal teams have.”

That was the moment we knew the work had landed. Not because the framework was elaborate — it was not — but because every question that had blocked him three months earlier now had a one-page answer attached to it.

This is what an AI governance framework is for.

Why a framework, not a checklist?

The phrase “AI governance framework” gets used loosely. Most of the time it describes a policy document (principles, commitments, a committee structure) that lives in a Confluence page and rarely gets read. That is not what we mean by it, and it is not what enterprise procurement teams are actually looking for.

The framework we deliver at the close of an engagement is the artifact pack a buyer’s risk team can evaluate without ever speaking to your engineers. It is the deliverable that turns the posture assessment from Part 1 and the measurement layer from Part 2 into something portable. Something a CTO can hand to a procurement team, a regulator, an auditor, or a board, and have it speak for itself.

A checklist describes intentions. A framework gives a buyer something to point to.

The distinction matters because enterprise AI procurement now runs on documentation. The compliance and security teams reviewing your product are not going to interview your engineers. They are going to read what you give them. If what you give them is a five-page principles document, you have lost the conversation before it started. If what you give them is a structured pack of artifacts that answers their questions before they ask, you have changed what is being evaluated. You have moved the conversation from “do we trust this vendor?” to “how does this vendor’s governance system work?”, and the second question is one a well-built framework answers cleanly.

Responsible AI is the floor. A framework is the floor made legible to people who do not work for you.

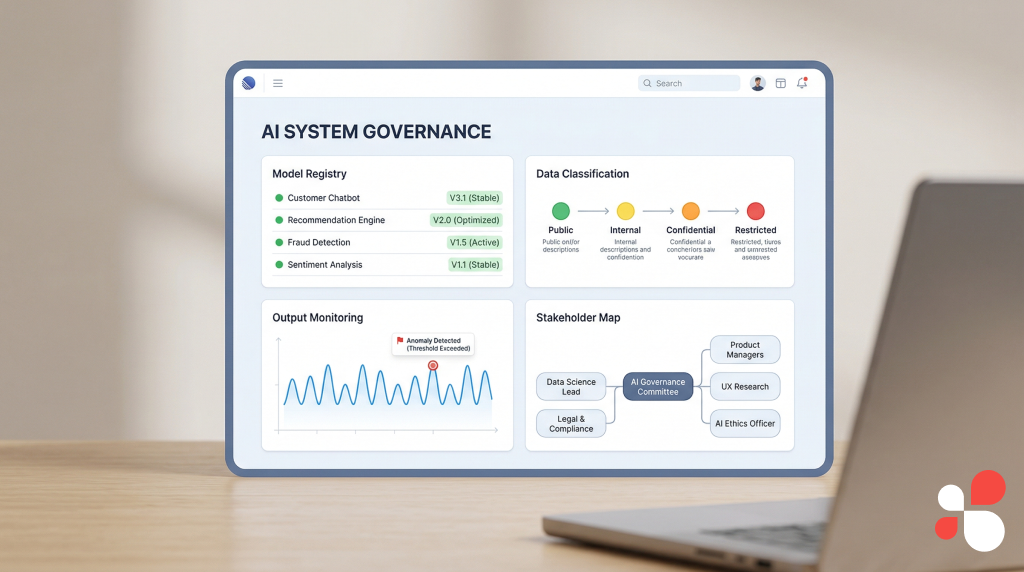

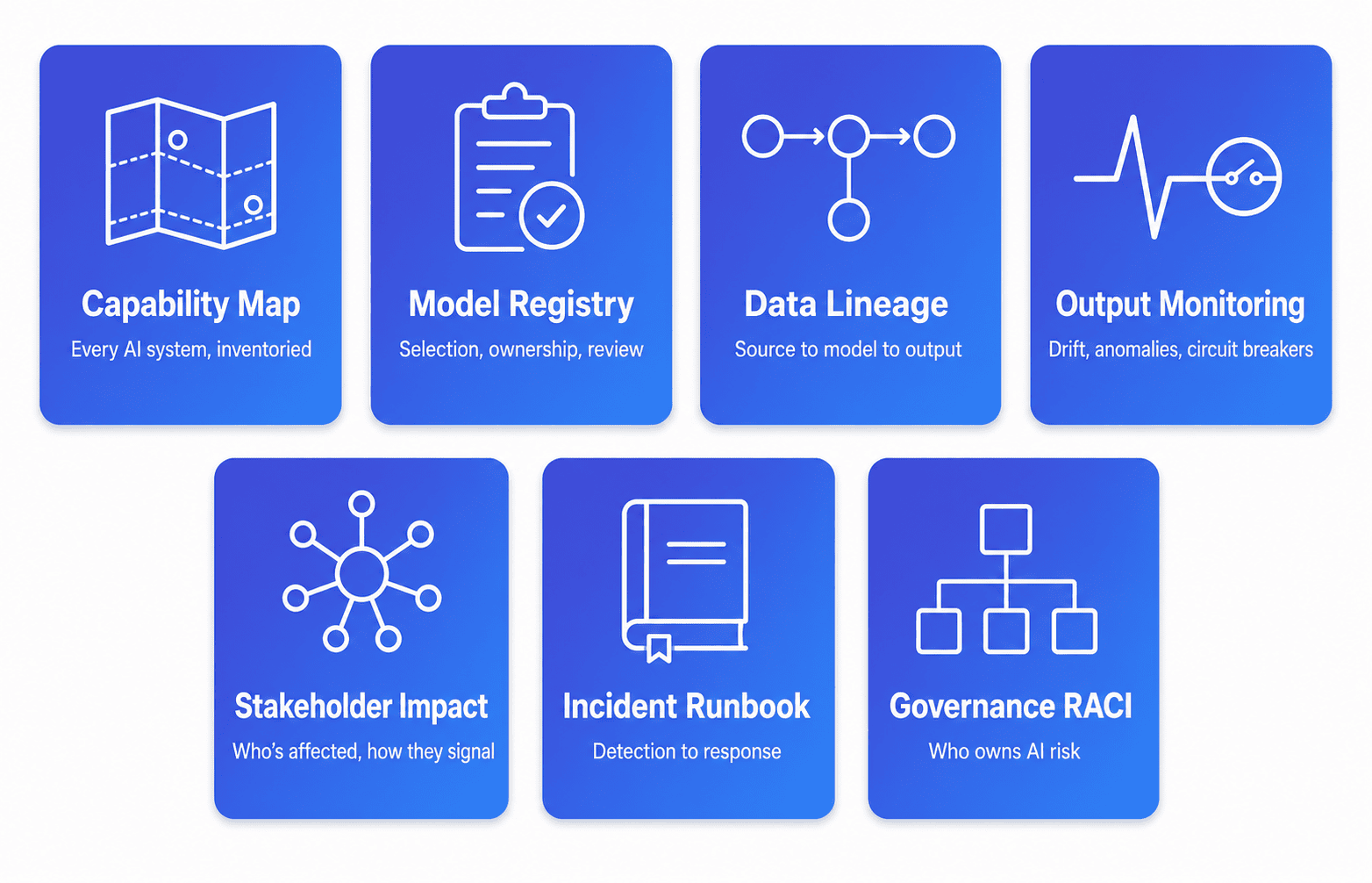

What does the AI governance framework actually contain?

A complete AI governance framework — the kind we deliver at the close of an enterprise-readiness engagement — contains seven artifacts. None of them is novel in isolation. Most of them have analogues in other compliance domains. The value is in having all seven, integrated, current, and owned by named people inside the organization.

1. Capability Map. The deliverable from the posture assessment in Part 1. Lists every AI system in the organization — the deliberate ones, the embedded third-party ones, the experimental internal tools. For each system: where it runs, what it does, who built it, who maintains it now. Answers: what AI is in your product?

2. Model Registry. The living document of every model in production. For each model: selection rationale, evaluation baseline, last review date, retraining cadence, named owner. Answers: how do you choose and govern models? This is also the artifact most procurement teams ask to see first, because it is the cleanest signal that the organization treats model selection as a governed decision rather than an engineering convenience.

3. Data Classification and Lineage Diagram. Every AI system’s inputs mapped to a sensitivity tier — public, internal, customer, regulated — with a visual lineage from source through classification gate through model to output. Answers: what data feeds your AI, and how is it controlled? For clients selling into healthcare or financial services, this artifact is non-negotiable. We have seen procurement teams reject vendors over the absence of this single document.

4. Output Monitoring and Circuit Breaker Specification. For each AI output: the monitoring approach, the drift detection method, the anomaly thresholds, and — for outputs that trigger automated actions — the circuit breaker conditions under which the automation pauses for human review. Answers: how do you know your AI is behaving correctly, and what stops it when it is not?

5. Stakeholder Impact Map. A hub-and-spoke diagram for each AI system showing internal and external stakeholders, the nature of impact for each group, and the feedback channel through which each group can signal that something is not working. Answers: who is affected by this AI, and how do they tell you?

6. AI Incident Response Runbook. The procedure for when an AI system behaves unexpectedly: detection, escalation, decision authority, internal communication, external communication. Tied directly to the stakeholder impact map. Answers: what happens when something breaks?

7. Governance Ownership Chart (RACI). A single page identifying who is Responsible, Accountable, Consulted, and Informed for each governance dimension across every AI system. Names. Titles. Not committees. Answers: who owns AI risk?
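To make the model registry (artifact 2) concrete, here is a minimal sketch of one registry row and a staleness check. The fields follow the ones listed above; the class name, the 90-day review window, and every sample value are assumptions made up for illustration, not taken from any client registry.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class RegistryEntry:
    """One row of a model registry. Field names are illustrative."""
    model_name: str
    selection_rationale: str
    evaluation_baseline: str
    last_review: date
    retraining_cadence: str
    owner: str  # a named person, not a committee


def overdue_reviews(entries, today, max_age_days=90):
    """Return names of models whose last review is older than max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return [e.model_name for e in entries if e.last_review < cutoff]


# Invented sample rows, for illustration only.
registry = [
    RegistryEntry(
        model_name="support-triage-classifier",
        selection_rationale="placeholder: why this model was chosen",
        evaluation_baseline="placeholder: metric and dataset",
        last_review=date(2025, 12, 1),
        retraining_cadence="quarterly",
        owner="Jane Doe, Staff ML Engineer",
    ),
    RegistryEntry(
        model_name="invoice-ocr-extractor",
        selection_rationale="placeholder: why this model was chosen",
        evaluation_baseline="placeholder: metric and dataset",
        last_review=date(2025, 6, 30),
        retraining_cadence="semi-annual",
        owner="John Roe, Engineering Manager",
    ),
]

if __name__ == "__main__":
    print(overdue_reviews(registry, today=date(2026, 1, 15)))
```

The same structure works as columns in a spreadsheet. The point of the check is only that "last review date" is something a reviewer can verify rather than take on trust.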

The reason this configuration works — and the reason we converged on these seven specifically — is that they collectively answer almost every recurring question we have seen come back from enterprise procurement teams over the years we have been doing this work. New questions still appear. The framework is a living thing. But the seven-artifact spine has held up across healthcare, financial services, and crypto/DeFi engagements, and it is the spine we recommend.

The single schematic that ties it together

One of the things we have learned doing AI vendor assessment work from both sides — building frameworks for clients, and watching enterprise risk teams evaluate them — is that procurement reviewers do not have time to assemble a mental picture from seven separate documents.

So we always build an eighth artifact, which is technically not part of the pack: a single-page schematic that shows how all seven documents relate to each other. The capability map at the top. The four measurement-layer dimensions feeding into it. The incident runbook and ownership chart wrapping around the outside as the operational layer. One page. Readable in ninety seconds.

The schematic does not replace any of the underlying documents. It is a navigation aid. But it is often the first thing the buyer’s team looks at, and it sets the frame for how they read everything else. Worth the design time.

What is the Stand-Prove-Procure Method?

The seven-document pack is what we deliver. But every client’s pack is different in detail — different AI systems, different regulatory exposure, different organizational shape. The transferable thing is not the pack. It is the method we use to develop one.

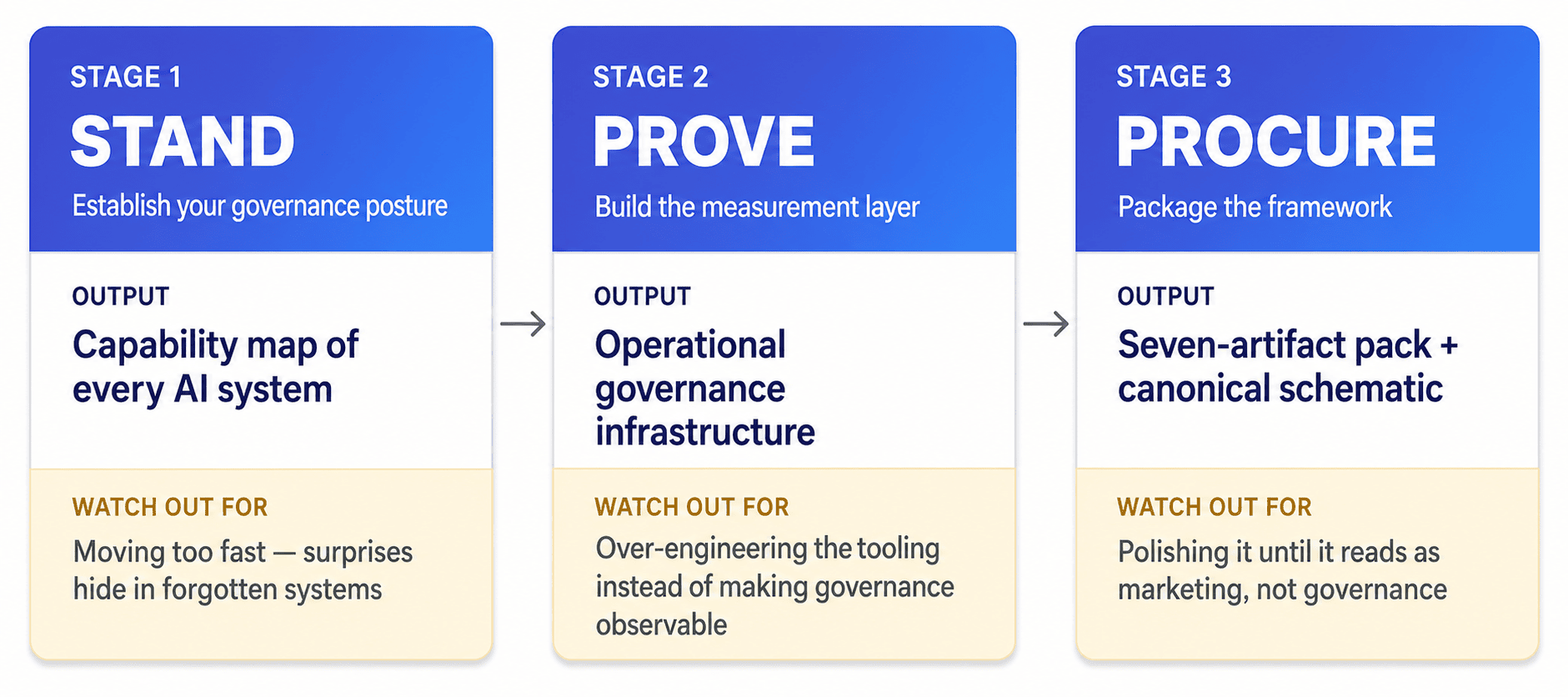

We call it the Stand-Prove-Procure Method. It has three stages. Each stage has a specific output, and the outputs are sequential in a way the work itself is not: you cannot package what you have not proven, and you cannot prove what you have not mapped.

Stage 1 — Stand: Establish your governance posture. Run the five-dimension posture assessment from Part 1. Inventory what is running. Document how each model was chosen, what data goes in, what comes out and where it goes, who is affected. The output is a capability map. The temptation in this stage is to move fast — most of the inventory feels obvious. Resist it. The surprises in AI governance almost always come from things the team forgot it shipped: the internal tool spun up on someone’s API key, the third-party vendor that quietly added AI to its product, the experimental feature that never got formally reviewed. Going slow in Stand is what lets the rest of the method work.

Stage 2 — Prove: Build the measurement layer. Instrument the four components from Part 2: model registry, data classification, output monitoring, stakeholder impact map. The output is operational governance infrastructure — not a document, but a system that runs alongside the product. The temptation here is to build elaborate tooling. Resist that too. The point of the measurement layer is to make governance observable, not to make it impressive. Several of the highest-functioning model registries we have built for clients are spreadsheets. Several of the cleanest data lineage diagrams are static images updated quarterly. Procurement teams trust artifacts that look maintained, not artifacts that look engineered.

Stage 3 — Procure: Package the framework. Assemble the seven-artifact pack. Build the canonical schematic. Map each procurement question your buyers actually ask to the artifact that answers it. The output is a story you can walk into a sales conversation with — and one a risk reviewer can evaluate without you in the room. The temptation in this stage is to over-design. To make it look polished. Resist a third time. A framework that looks like it was made for a pitch reads to procurement teams as marketing. A framework that looks like a working document reads as governance.
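The question-to-artifact mapping in Stage 3 can be kept as plain data and checked for gaps. The sketch below is one possible shape, not a prescribed format: the artifact keys and the helper name `unanswered` are hypothetical, and the four sample questions and their mappings are the ones discussed in this article.

```python
# The seven artifacts in the pack, as short illustrative keys.
ARTIFACTS = {
    "capability_map",
    "model_registry",
    "data_lineage_diagram",
    "output_monitoring_spec",
    "stakeholder_impact_map",
    "incident_runbook",
    "ownership_chart",
}

# Each buyer question mapped to the artifact(s) that answer it.
QUESTION_MAP = {
    "How do you monitor model performance over time?": [
        "model_registry",
        "output_monitoring_spec",
    ],
    "What is your data lineage story?": ["data_lineage_diagram"],
    "Who owns AI risk in your organization?": ["ownership_chart"],
    "What happens if your AI does something unexpected?": [
        "incident_runbook",
        "stakeholder_impact_map",
        "output_monitoring_spec",
    ],
}


def unanswered(questions, question_map, artifacts):
    """Return questions with no mapping, or mapped to an artifact not in the pack."""
    gaps = []
    for question in questions:
        mapped = question_map.get(question, [])
        if not mapped or any(name not in artifacts for name in mapped):
            gaps.append(question)
    return gaps


if __name__ == "__main__":
    incoming = list(QUESTION_MAP) + ["Do you train on customer data?"]
    for gap in unanswered(incoming, QUESTION_MAP, ARTIFACTS):
        print("No artifact answers:", gap)
```

Running a new security questionnaire through a check like this shows which questions the pack already covers and which ones need a new or amended artifact.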

The three stages are not strictly sequential in execution — Stand and Prove tend to overlap, and we have done partial Procure work in parallel with late-stage Prove on several engagements. But the outputs are sequential, and the discipline of producing them in order is what keeps the framework honest. Skip Stand and your measurement layer will be aimed at the wrong systems. Skip Prove and your artifact pack will be theatrical. Get the order right and the method scales — to new AI capabilities, to new regulatory environments, to new clients.

The other thing the method does, which we did not fully appreciate until we had run it with several clients, is build the muscle. By the time a team has completed Stand-Prove-Procure once, it has a repeatable pattern for every new AI capability it ships. The next model gets a registry entry by default. The next data source gets classified before it is plumbed in. The next output gets a monitoring plan as part of its definition of done. Governance stops being a project. It becomes how AI capabilities get built.

What did the procurement conversation actually look like?

When we were in the kickoff call with this client three months earlier, the buyer’s questions sounded daunting. By the time the framework was assembled, they were straightforward. Here is how four of the hardest ones got answered, directly from the artifacts.

“How do you monitor model performance over time?” We pulled up the model registry. Every model had a documented evaluation baseline, a defined drift detection approach, and a review cadence. The output monitoring spec described what was tracked per system and what triggered an alert. The buyer’s data science lead asked a single follow-up about retraining frequency and got a one-line answer.

“What is your data lineage story?” The data classification and lineage diagram was a one-page artifact showing each AI system’s inputs traced from source to model to output. Each input was tagged with its sensitivity tier. The buyer’s compliance lead asked one clarifying question about a third-party data source and got the lineage trail for it on the same page.

“Who owns AI risk in your organization?” The governance ownership chart named a single accountable executive, plus the responsible engineering and product owners for each AI system. Not a committee. Not “the leadership team.” A person. With a name and a title. The buyer’s procurement lead noted it and moved on.

“What happens if your AI does something unexpected?” The incident response runbook walked through detection, escalation, communication, and decision authority. The stakeholder impact map showed which downstream parties get notified for each system. The circuit breaker spec showed which automated outputs pause under defined conditions. Three minutes. Three artifacts. Done.

None of these answers were rehearsed. They came directly from the documents. That is the test of a real AI governance framework: whether the answers fall out of the artifacts naturally, or whether the team has to translate. If you find yourself rehearsing, the artifacts are not finished.

What changed after the framework was in place

Within ten weeks of starting, the client moved from stalled procurement to closing his first enterprise contract. The procurement cycle on subsequent enterprise deals shortened materially — security and compliance reviews that had been taking months were getting through in weeks.

More importantly, his engineering team was now shipping AI capabilities with governance built in by default. Six months in, when we checked back, he was not running governance as a workstream anymore. The model registry was being updated by whichever engineer shipped a new model. The data classification diagram was getting amended as part of every new integration’s pull request. The framework had become the way the team operated, not a separate exercise the team had to remember to do.

That is the outcome we work toward. The deal closing is the surface result. The durable result is governance becoming invisible — woven into how the team ships, rather than something that gets reassembled every time procurement asks.

Five things a CTO can do this quarter

Most readers of this series are not going to hire us, and that is fine. The method is more useful when the people who need it can run it themselves. Here is what we tell CTOs who recognize their own position in this story and want to start without an engagement.

1. Run a self-assessment against the five posture dimensions before procurement asks. Spend a day. Not a quarter — a day. Get your engineering leads in a room and answer the five questions from Part 1. What AI is running and where, how each model was selected, what data goes in, what comes out, who is affected. The answers will be incomplete. That is the point. Knowing what you do not know is the start of governance.

2. Stand up a model registry, even if it is a single Notion page. The bar is low. List every model in production. For each one, capture three things: who chose it and why, when it was last reviewed, who owns it. The discipline of having the page matters more than the format. You can iterate on the format later. You cannot iterate on an inventory you never built.

3. Pick one AI system and instrument its data lineage end-to-end. Do not try to do all of them at once. Pick the system with the most regulated data and trace every input from source to model to output. Document it as a diagram. Use it as the template for the rest. Doing this once, properly, is more valuable than doing all of them halfway. It also surfaces every governance question your team has been deferring, which is the second-order benefit.

4. Define your human-versus-automated output boundary in writing. For every AI system, answer one question: does the output feed a human decision, or does it trigger an automated action? If it triggers an action, what is the circuit breaker? Most teams have an intuitive answer. Few have a documented one. The gap between the two is exactly where procurement teams get nervous, and exactly where AI risk ownership gets ambiguous when something goes wrong.

5. Name a single owner for AI risk before someone external asks. Not a committee. Not “the leadership team.” A person. The act of naming an owner forces the conversation about what AI risk actually means in your organization, which is the conversation governance was always supposed to start. Do it before you are asked. “We are working on it” is a fine answer internally. It is not a fine answer in procurement.
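Of the five steps, the output boundary in step 4 is the one that most directly becomes something checkable. A minimal sketch follows, assuming a very simple breaker condition (pause when the share of anomalous outputs in a monitoring window exceeds a threshold). The class, the function names, the threshold, and the sample systems are all hypothetical; a real specification would define its own conditions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class OutputBoundary:
    """Documents whether a system's output feeds a human or triggers an action."""
    system: str
    triggers_automated_action: bool
    # Assumed breaker condition: pause automation when the anomaly rate in the
    # monitoring window exceeds this fraction. None means nothing is documented.
    max_anomaly_rate: Optional[float] = None


def undocumented_breakers(boundaries):
    """Systems that trigger automated actions but have no documented breaker."""
    return [
        b.system
        for b in boundaries
        if b.triggers_automated_action and b.max_anomaly_rate is None
    ]


def should_pause(boundary, anomalies, total):
    """True when automation should pause for human review under the assumed rule."""
    if not boundary.triggers_automated_action or boundary.max_anomaly_rate is None:
        return False
    if total == 0:
        return False
    return anomalies / total > boundary.max_anomaly_rate


# Invented sample systems, for illustration only.
boundaries = [
    OutputBoundary("churn-risk-score", triggers_automated_action=False),
    OutputBoundary("refund-auto-approver", True, max_anomaly_rate=0.05),
    OutputBoundary("fraud-hold", True),  # automated, but no breaker written down
]

if __name__ == "__main__":
    print("Missing breaker:", undocumented_breakers(boundaries))
    print("Pause refunds?", should_pause(boundaries[1], anomalies=8, total=100))
```

The first function is the useful one at this stage: it lists the systems where the team has an intuitive answer about what stops the automation but nothing written down.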

If a team does these five things in the next ninety days, it will not have a complete AI governance framework. But it will have the spine of one — and it will be ahead of most companies its size moving into enterprise AI procurement.

Governance as a practice, not an event

The shift this series has been describing — posture, to proof, to procurement-ready framework — is fundamentally a shift from governance as an event to governance as a practice. Companies that get there do not rerun the project every time enterprise procurement asks. They have built the muscle. New AI capability gets a registry entry, a classification, a monitoring plan, an owner. Not because anyone reminded them. Because that is how the team works now.

That is what makes the framework durable. And it is what makes the difference, in 2026 and beyond, between SaaS companies that can sell into regulated enterprise markets and the ones that cannot.

The AI is the easy part. The governance is what scales it.

Segev Shmueli is the founder of ProductiveHub, providing fractional CTO and AI leadership services for startups and growing companies, including in regulated industries like healthcare, financial services, and crypto/DeFi.