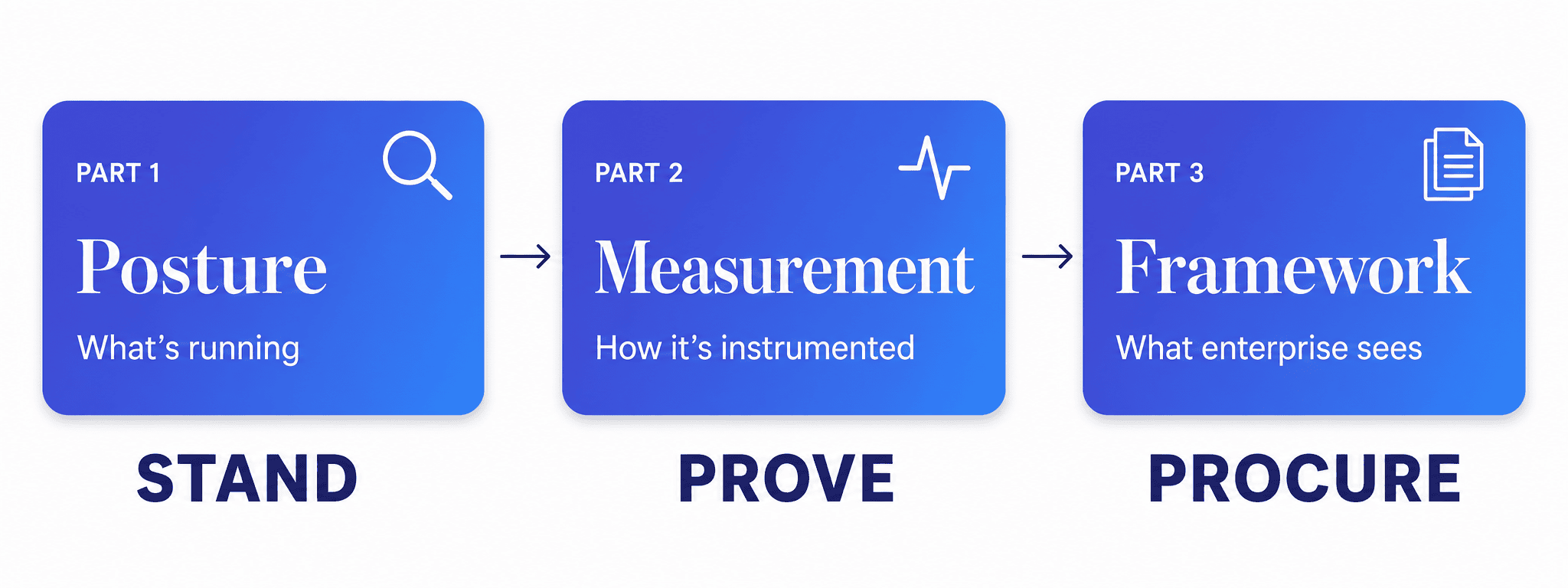

This is the second in a three-part series on how we approach AI governance with clients moving into enterprise markets. Part 1 covered the posture assessment. Part 3 will present the full framework.

By the time we finished the posture assessment with our client, we had a clear picture of what was running. Three AI-powered features in production, two third-party integrations with AI components embedded inside them, and one internal tooling system the engineering team had built for their own use. All of it working. None of it documented in a way that would survive an enterprise procurement review.

That’s not unusual. It’s actually a reasonably healthy starting point — the AI is real, it’s delivering value, and the team that built it understands it well. The work now is making that understanding transferable. Turning what lives in the team’s heads into something visible, measurable, and demonstrable to an outside audience. This is where AI governance measurement becomes the actual work — and where the engagement shifts from mapping to building.

This is the part of an engagement where governance stops being a concept and starts being infrastructure.

Why Policies Without Instrumentation Don’t Hold Up in Enterprise Procurement

Most governance efforts start with a policy document. Here are our principles. Here’s our commitment to responsible AI. Here’s the committee we’ve formed to oversee it.

We don’t start there. Policies without instrumentation are just intentions — and enterprise procurement teams have seen enough intentions to know the difference. What they want to see is evidence: that you know how your models are behaving, that you know what data is moving through them, that you have someone accountable when something changes.

Enterprise AI instrumentation is the bridge between saying you have governance and being able to show it. You can only produce that evidence if you’ve built the measurement layer first. So that’s where we go.

What Does an AI Measurement Layer Actually Include?

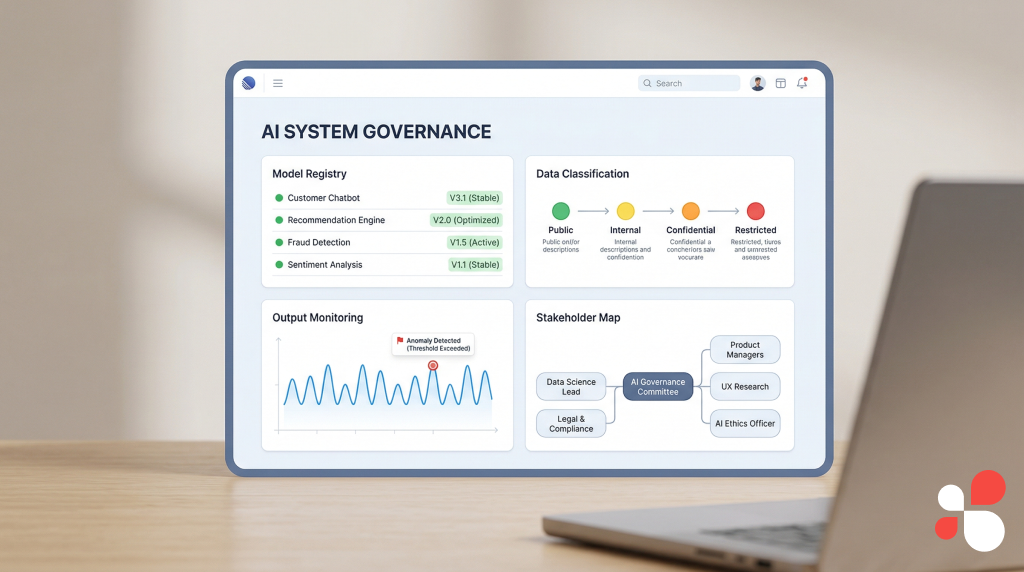

An AI measurement layer includes four instrumented components: a model registry capturing selection decisions, a data classification system tracking what feeds your AI, an output monitoring setup tracking what it produces and where it goes, and a stakeholder impact map documenting who is affected. Together these form an operational governance system — not a document, but infrastructure that runs alongside your product.

The posture assessment mapped five dimensions of the client’s AI footprint. Instrumentation builds on four of them: model selection, data in, data out, and stakeholder impact. Each one gets its own measurement approach, and together they form the foundation of a governance system that’s operational rather than theoretical.

Model Selection — Building a Model Registry

What is a model registry and why does it matter for AI governance? A model registry is a living document that captures which models are running in production, why each was chosen, when each was last reviewed, and who owns it. It gives enterprise buyers a concrete artifact to evaluate rather than a verbal assurance.

The first thing we establish is a documented decision framework for model selection — not just for the models currently running, but for every model choice going forward. This matters because enterprise clients will ask not just what model you’re using but why, and what criteria governed that choice.

The framework we built for this client covered four criteria: capability fit (does the model perform reliably on the specific task), data residency (where does the model process data, and does that meet the client’s requirements), vendor stability (is this a model backed by a provider with enterprise-grade SLAs and a compliance posture the client can reference), and cost at scale (do the unit economics hold as usage grows).

Every current model in production got documented against these criteria retrospectively. Every future model selection goes through the framework before anything ships. The output is the AI model registry — a living document the client’s team can maintain independently, that they can open in a procurement conversation and point to directly.
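To make the registry concrete, here is a minimal sketch of what one entry might look like as structured data. It is illustrative rather than the format we delivered: the field names, the example systems and models, and the 90-day review cadence are all assumptions.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ModelRegistryEntry:
    """One production model, documented against the four selection criteria."""
    system: str            # AI feature or integration that uses the model
    model: str             # model identifier (hypothetical values below)
    owner: str             # person or team accountable for the model
    last_reviewed: date
    capability_fit: str    # evidence of reliable performance on the task
    data_residency: str    # where the model processes data
    vendor_stability: str  # provider SLAs and compliance posture
    cost_at_scale: str     # unit economics as usage grows


def overdue_for_review(entries, today, max_age_days=90):
    """Return entries last reviewed more than max_age_days before today.

    The 90-day cadence is an assumed default, not a recommendation.
    """
    cutoff = today - timedelta(days=max_age_days)
    return [e for e in entries if e.last_reviewed < cutoff]


# Hypothetical entries for illustration only.
registry = [
    ModelRegistryEntry(
        system="support-summaries",
        model="vendor-a/model-x",
        owner="platform-team",
        last_reviewed=date(2024, 1, 15),
        capability_fit="Passed internal summarization evaluation set",
        data_residency="Processed in-region",
        vendor_stability="Enterprise SLA in place",
        cost_at_scale="Unit cost reviewed at projected volume",
    ),
    ModelRegistryEntry(
        system="internal-code-search",
        model="vendor-b/model-y",
        owner="engineering-tools",
        last_reviewed=date(2024, 5, 10),
        capability_fit="Spot-checked by engineering team",
        data_residency="Self-hosted",
        vendor_stability="Open-weights model, no vendor SLA",
        cost_at_scale="Fixed infrastructure cost",
    ),
]

stale = overdue_for_review(registry, today=date(2024, 6, 1))
print([entry.system for entry in stale])
```

Keeping the review date and owner on every entry is what lets the team answer "when was this last looked at, and by whom" without reconstructing it from memory.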

Data In — Classifying What Feeds Your AI Systems

How do you classify data inputs feeding an AI system? We use a four-tier classification: public data with no sensitivity constraints, internal operational data, customer data with standard privacy implications, and regulated data — anything covered by HIPAA, SOX, GDPR, or equivalent frameworks depending on the markets the client serves.

This is where the instrumentation gets most specific — and where clients with data sensitivity concerns pay the closest attention. Every AI system consumes data. The governance question is whether you can account for all of it.

Data classification for AI isn’t just about labeling data. It’s about tracing lineage: where the data originates, how it moves into the model, and what controls exist at each step.

For each AI system in production, we map every data input to its classification tier and document the lineage. For this client, that exercise surfaced one integration where customer data was passing through a third-party AI component without a clear lineage trail. Not a risk that had materialized — but one that needed documentation and a contractual review before it could be presented to an enterprise client with confidence.
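A small sketch of how the tier mapping and lineage check could be represented follows. The tier names come from the classification above; the `DataInput` structure, the example inputs, and the idea of listing gaps most-sensitive-first are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Tier(IntEnum):
    """The four classification tiers, ordered by sensitivity."""
    PUBLIC = 1
    INTERNAL = 2
    CUSTOMER = 3
    REGULATED = 4


@dataclass
class DataInput:
    name: str
    tier: Tier
    origin: str
    # Ordered steps from origin to model, each naming the control applied.
    lineage: list = field(default_factory=list)


def lineage_gaps(inputs):
    """Return inputs with no documented lineage, most sensitive first."""
    missing = [i for i in inputs if not i.lineage]
    return sorted(missing, key=lambda i: i.tier, reverse=True)


# Hypothetical inputs for illustration only.
inputs = [
    DataInput("product-docs", Tier.PUBLIC, origin="public website"),
    DataInput("ticket-text", Tier.CUSTOMER, origin="support desk"),
    DataInput(
        "claims-records",
        Tier.REGULATED,
        origin="client database",
        lineage=[
            "export job: field-level redaction",
            "model gateway: encrypted in transit, no retention",
        ],
    ),
]

for gap in lineage_gaps(inputs):
    print(f"{gap.tier.name}: {gap.name} has no lineage trail")
```

A check like this would surface the same kind of finding described above: an input carrying customer data with nothing recorded about how it reaches the model.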

That’s exactly the kind of thing the measurement layer is designed to catch — and to be able to show that you caught it, addressed it, and have controls in place going forward.

Data Out — Monitoring AI Outputs and Defining Human Oversight

How do you monitor AI outputs in an enterprise environment? We distinguish between outputs that feed human decisions and outputs that feed automated actions. Both require documentation and transparency. Automated outputs additionally require circuit breakers — defined conditions under which the automation pauses and a human gets involved. Monitoring covers output consistency, drift detection, and anomaly alerting across all AI systems.

Outputs are where AI systems meet the real world — and where the governance implications become most concrete. We instrument three things on the output side: what the system produces, where it goes, and what acts on it.

That last distinction matters most in practice. An AI output that feeds a human decision is governed differently from one that feeds an automated action. The former requires documentation and transparency. The latter requires all of that plus circuit breakers.
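As a rough illustration of a circuit breaker on an automated output, the sketch below pauses automation after a run of low-confidence results and stays paused until a person resets it. The trip condition is an assumption: it presumes each output carries a numeric confidence score, and the thresholds are placeholders rather than recommended values.

```python
from dataclasses import dataclass


@dataclass
class CircuitBreaker:
    """Pauses an automated action when outputs trip a defined condition."""
    min_confidence: float      # hypothetical trip threshold
    max_consecutive_low: int   # low-confidence outputs tolerated in a row
    _low_streak: int = 0
    paused: bool = False

    def allow(self, confidence: float) -> bool:
        """Return True if the automated action may proceed for this output."""
        if self.paused:
            return False
        if confidence < self.min_confidence:
            self._low_streak += 1
            if self._low_streak >= self.max_consecutive_low:
                self.paused = True  # stays paused until a human resets it
            return False            # this output goes to human review
        self._low_streak = 0
        return True

    def reset(self) -> None:
        """Called by a human reviewer after investigating the pause."""
        self._low_streak = 0
        self.paused = False


breaker = CircuitBreaker(min_confidence=0.8, max_consecutive_low=2)
decisions = [breaker.allow(c) for c in [0.95, 0.6, 0.5, 0.99]]
print(decisions, breaker.paused)
```

In this run the last output is blocked even though its score is high, because the breaker tripped on the two before it. That is the point of the design: once the condition is met, nothing flows automatically until someone has looked.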

For each AI system, we define the output type, the downstream destination, the human oversight model, and the monitoring approach. AI output monitoring covers three things: output consistency (is the model behaving the way it did when it was evaluated), drift detection (are outputs shifting in ways that suggest the model’s behavior is changing), and anomaly alerting (are there outputs that fall outside expected parameters and need human review).
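One simple way to picture drift detection and anomaly alerting is to compare recent outputs against a baseline captured when the model was evaluated. The sketch below assumes outputs can be summarized as a single numeric metric (a score or a response length, for example). The statistical thresholds are placeholders, and real monitoring would likely use more than one signal.

```python
from statistics import mean, stdev


def anomalies(values, baseline, k=3.0):
    """Return values more than k baseline standard deviations from the baseline mean."""
    mu, sigma = mean(baseline), stdev(baseline)
    return [v for v in values if abs(v - mu) > k * sigma]


def drifted(recent, baseline, threshold=2.0):
    """True if the recent mean sits more than `threshold` standard errors from the baseline mean."""
    mu, sigma = mean(baseline), stdev(baseline)
    standard_error = sigma / len(recent) ** 0.5
    return abs(mean(recent) - mu) > threshold * standard_error


# Hypothetical metric values for illustration only.
baseline = [100, 102, 98, 101, 99, 100, 103, 97]  # captured at evaluation time
recent = [104, 105, 103, 105]                     # latest production window

print("drift:", drifted(recent, baseline))
print("anomalies:", anomalies([100, 120, 99], baseline))
```

The two checks answer different questions. Drift looks at whether the window as a whole has moved, while anomaly alerting flags individual outputs that need a human to look at them.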

By the end of this exercise, every AI output in the client’s system has a documented trail from production to destination, with defined oversight at each step.

Stakeholder Impact — Mapping Who Your AI Affects

Who does your AI affect, and how do they report problems? A stakeholder impact map documents every group — internal teams, customers, end users, downstream populations — that is shaped by AI outputs, along with the feedback channel each group uses to signal when something isn’t working as expected.

The final instrumentation dimension is the one most companies skip — and the one enterprise procurement teams find most revealing. It’s not enough to know what your AI does. You need to know who it affects, and in what way.

We build a stakeholder impact map for each AI system. Internal stakeholders — the teams who use AI outputs to do their jobs — get documented alongside external ones: customers, end users, and in the case of this client’s healthcare vertical, patients and clinicians downstream of the workflows their product supports.

For each stakeholder group, we document the nature of the impact — what decisions or experiences are shaped by AI outputs — and the feedback channel. How does a stakeholder signal that something isn’t working as expected? Who receives that signal, and what happens next?
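The questions above map naturally onto a small lookup structure. This is a sketch under assumptions: the field names, the example system, and the routing function are hypothetical, and a real map would usually live wherever the team already tracks incidents.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StakeholderImpact:
    system: str            # AI system whose outputs affect the group
    group: str             # who is affected
    impact: str            # decisions or experiences shaped by AI outputs
    feedback_channel: str  # how the group signals a problem
    signal_owner: str      # who receives that signal
    next_step: str         # what happens after the signal arrives


def route_signal(impact_map, system, group):
    """Look up who receives a stakeholder's signal and what happens next."""
    for entry in impact_map:
        if entry.system == system and entry.group == group:
            return entry.signal_owner, entry.next_step
    raise LookupError(f"No feedback channel documented for {group!r} on {system!r}")


# Hypothetical entries for illustration only.
impact_map = [
    StakeholderImpact(
        system="triage-assist",
        group="clinicians",
        impact="Suggested priority order for incoming cases",
        feedback_channel="in-app 'report a problem' button",
        signal_owner="clinical-product-lead",
        next_step="Review within one business day; pause feature if confirmed",
    ),
    StakeholderImpact(
        system="triage-assist",
        group="support-team",
        impact="Drafted responses to customer questions",
        feedback_channel="internal support channel",
        signal_owner="support-ops",
        next_step="Log the example and forward to the model owner",
    ),
]

owner, action = route_signal(impact_map, "triage-assist", "clinicians")
print(owner, "->", action)
```

Raising an error for an unmapped group is deliberate in this sketch: a stakeholder with no documented channel is a gap in the map, and it is better to find that out before an incident than during one.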

This becomes the foundation of the client’s AI incident response process, which we establish as part of the same workstream. It’s not a separate document. It grows directly from the stakeholder map, because the map already defines who’s affected and how.

What Does an Operational AI Governance System Actually Look Like?

What does enterprise AI governance look like when it’s actually operational? It looks like observable infrastructure, not a policy document: a model registry with documented selection rationale and review cadences, a data classification layer with lineage trails across every input, an output monitoring setup with drift detection and anomaly alerting, and a stakeholder impact map with defined feedback channels. Each piece is visible, auditable, and maintainable by the client’s own team.

Not “we take AI governance seriously” — every vendor says that. But “here’s our model selection criteria, here’s how we classify and trace data, here’s how we monitor outputs, here’s who’s accountable when something changes.” That’s a different conversation to walk into.

By the time we’ve instrumented all four dimensions, the client has something they didn’t have at the start of the engagement: a governance system that’s actually running.

It also has to keep running without us, and that matters more than it might seem. Governance infrastructure that only works while we’re in the room isn’t governance; it’s consulting theater. Everything we build is designed to be owned and operated independently after we leave.

Three weeks into the instrumentation workstream, the client had a governance system running across their current projects and a framework ready to apply to every new AI capability they ship going forward. The model registry, the data classification layer, the output monitoring setup, the stakeholder maps — all of it documented, all of it operational.

What This Gives You in an Enterprise Sales Conversation

More than the infrastructure itself, what the measurement layer produces is a story you can actually tell — and one enterprise procurement teams can evaluate.

Enterprise procurement teams are evaluating risk as much as capability. When they ask governance questions, they’re looking for evidence that your organization understands its own systems — not just that the product works, but that you know how it works, what data moves through it, what happens when something behaves unexpectedly, and who owns it. The measurement layer makes all of that answerable.

There’s also a practical benefit inside the organization. Once the instrumentation is in place, the client’s team has a repeatable process for every AI capability they build going forward. New feature coming? Run it through the model selection framework. New data source feeding into an AI system? Classify it and document the lineage. New output going somewhere unexpected? Define the oversight model before it ships. The governance muscle gets built into how the team works, not maintained as a separate compliance exercise.

That shift — from governance as an event to governance as a practice — is what makes the system durable. It doesn’t depend on anyone remembering to do it. It’s just how AI capabilities get shipped.

Up Next: The Full Framework

Part 3 assembles everything into the complete picture — the posture assessment from Part 1, the measurement layer from Part 2, and the documentation, flowcharts, and schematics that pull it together into what an enterprise client would actually evaluate in a procurement conversation.

If you’re working through this with your own team, the measurement layer is where the work starts to feel real. The assessment tells you where you are. The instrumentation gives you something to show. Part 3 is about how you present it.

Segev Shmueli is the founder of ProductiveHub, providing fractional CTO and AI leadership services for startups and growing companies, including in regulated industries.