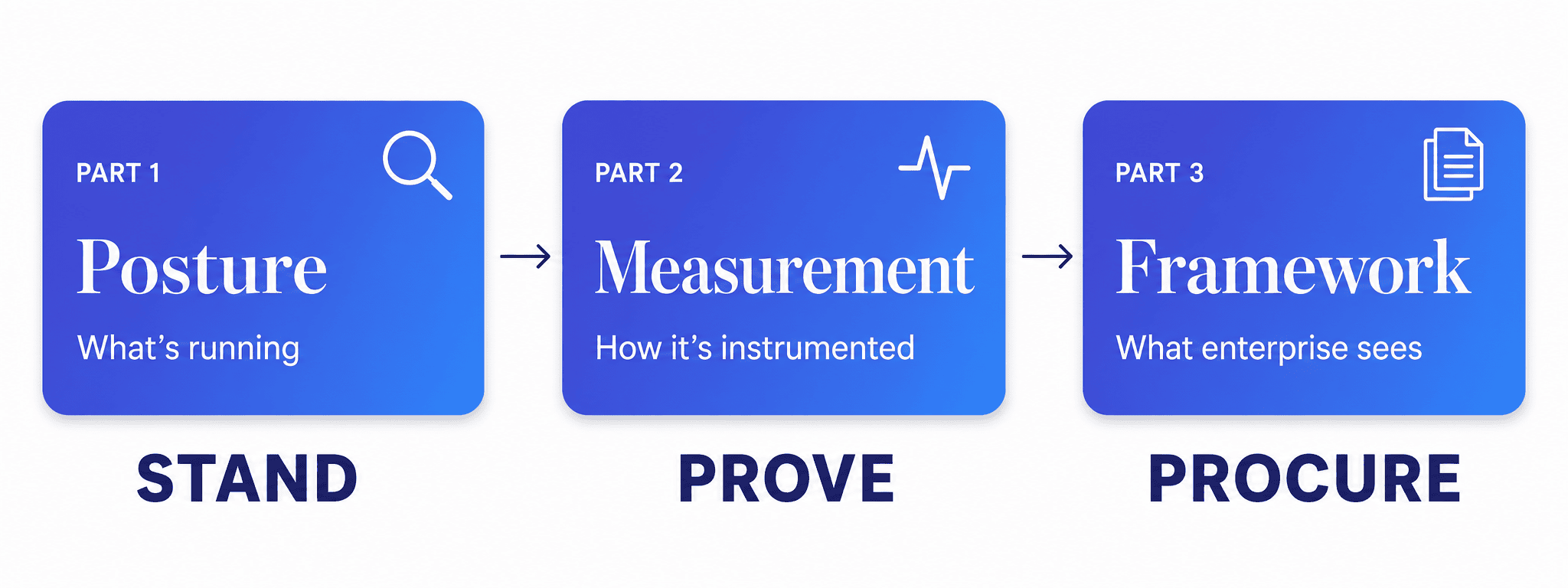

This is the first in a three-part series on how we approach AI governance with clients moving into enterprise markets. Part 2 covers building the measurement layer. Part 3 presents the full framework.

We got a call from a CTO at a SaaS company that had been quietly winning in the mid-market. Good product, good team, real traction in healthcare and finance. AI features shipping, customers happy, nothing broken.

Then he started talking to enterprise clients.

The questions were different at that level. Not ‘does it work?’ — his product clearly worked. But ‘how do you monitor model performance over time?’ and ‘what’s your data lineage story?’ and ‘who owns AI risk in your organization?’ Questions he hadn’t needed to answer before, because at the scale he’d been operating, trust was built through relationships and results.

Enterprise procurement doesn’t work that way. It runs on documentation, accountability, and demonstrable process. And he was honest with himself: he had a strong product and a team that had built things that worked — but the governance infrastructure to back it up at that level? That was a different conversation. One he hadn’t had yet.

So he called us.

The first thing we told him was the same thing we tell every client in this position: before we build anything, we need to see what’s already there. Not what the architecture diagram says is running, but what’s genuinely in production, touching data, and affecting users. You can’t govern what you haven’t mapped — and in our experience, those two things are further apart than most teams expect.

So we started with an assessment.

Why does AI governance start with visibility?

Governance starts with visibility because you can’t govern what you haven’t mapped. Most SaaS companies in this position have had the same experience: AI features get built because they solve real problems, and they ship fast. Governance doesn’t keep pace, not because anyone made a bad decision, but because at mid-market scale it was never the thing standing between them and the next deal. Until it was.

Walking into an enterprise procurement conversation prepared means closing that gap before the conversation happens. That starts with an honest picture of where you actually stand.

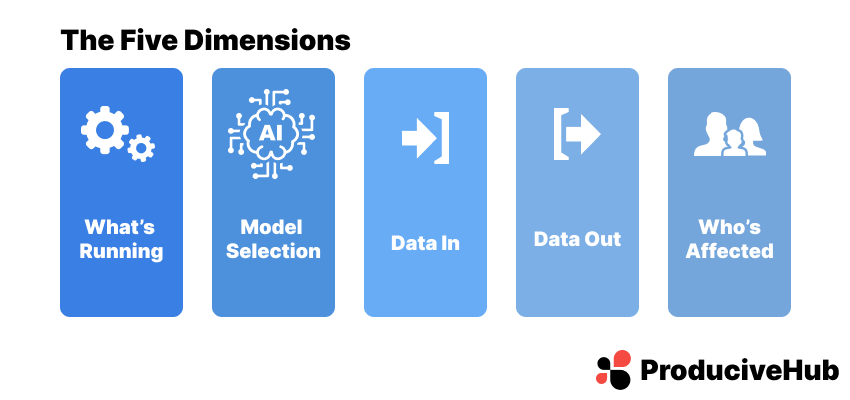

What does an AI governance posture assessment cover?

The posture assessment covers five dimensions. They sound straightforward. Getting complete answers to all five is where the work is.

1. What AI is running, and where

This is the inventory question, and the full answer is almost always more interesting than the first one. The first pass typically surfaces the obvious: the AI features in the product, the models powering them, the integrations the engineering team built intentionally. The second pass surfaces everything else. The internal tool someone spun up using an API key. The third-party vendor that quietly added AI capabilities to a product you’ve been using for three years. The experimental feature that shipped and never got formally reviewed.

We’re not looking for trouble here. We’re building a complete picture. You can only govern what you can see.

2. How models were selected

Every AI system rests on a model choice. What we want to understand is whether that choice was made deliberately, against defined criteria, or whether it was made by whoever was building at the time based on what was available and familiar. Both happen. Neither is a problem in itself — but they have different implications for governance. A deliberate selection process produces documentation, rationale, and a clear owner. An informal one produces a system that works but can’t be easily explained to a procurement team asking why you chose it.

3. What data is going in

This is where regulated-industry clients always lean forward. What data is entering your models? Where does it come from? What’s the sensitivity classification? Is there a lineage trail? For a company selling into healthcare or finance, the answers to these questions will be scrutinized. We map every data input across every AI system, not just the ones the engineering team considers significant.

4. What’s coming out, and where it goes

Outputs are the half of the equation that most assessments underweight. We look at what each system produces, where those outputs travel, and what decisions or actions they inform downstream. An AI system generating a summary that a human reviews before any action is taken sits in a very different risk category than one whose output feeds directly into an automated workflow. Enterprise procurement teams understand this distinction. You should be able to explain it clearly for every AI system you run.

5. Who is affected

The fifth dimension is the stakeholder map: who inside the organization interacts with or depends on AI outputs, and who outside it (customers, end users, regulated populations) is affected by them. It matters because enterprise clients aren’t just evaluating your technical posture. They’re evaluating your understanding of your own system’s reach. If you can articulate not just what your AI does but who it touches and how, that’s a meaningful signal of maturity.
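One way to picture the five dimensions is as a single inventory record per AI system. The sketch below is illustrative only: the class, field names, and sample values are assumptions made for this example, not a schema from our engagements. It simply shows how one record can hold an answer for each dimension and report which answers are still missing.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class OutputPath(Enum):
    """How a system's output reaches a decision or action (dimension 4)."""
    HUMAN_REVIEWED = "human_reviewed"  # a person reviews before any action is taken
    AUTOMATED = "automated"            # output feeds directly into a workflow


@dataclass
class AISystemRecord:
    """One inventory entry. All field names are hypothetical."""
    name: str                          # 1. what is running
    location: str                      # 1. where (product, internal tool, vendor)
    model: str = ""                    # 2. which model
    selection_rationale: str = ""      # 2. why it was chosen; empty if informal
    data_inputs: list[str] = field(default_factory=list)       # 3. what goes in
    data_sensitivity: str = ""         # 3. classification label
    output_path: Optional[OutputPath] = None                   # 4. where outputs go
    affected_parties: list[str] = field(default_factory=list)  # 5. who is affected

    def open_questions(self) -> list[str]:
        """Return the dimensions that still lack a complete answer."""
        missing = []
        if not self.model or not self.selection_rationale:
            missing.append("model selection")
        if not self.data_inputs or not self.data_sensitivity:
            missing.append("data inputs")
        if self.output_path is None:
            missing.append("outputs")
        if not self.affected_parties:
            missing.append("affected parties")
        return missing


# Hypothetical entry: the model is known, but nobody wrote down why it was chosen
# or who is affected by its summaries.
summarizer = AISystemRecord(
    name="visit-note summarizer",
    location="product feature",
    model="hosted LLM",
    data_inputs=["clinical notes"],
    data_sensitivity="PHI",
    output_path=OutputPath.HUMAN_REVIEWED,
)
print(summarizer.open_questions())  # ['model selection', 'affected parties']
```

Keeping the output path as an explicit field is a deliberate choice in this sketch: it forces the human-reviewed versus automated distinction to be recorded for every system rather than assumed.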

How do you run an AI governance assessment?

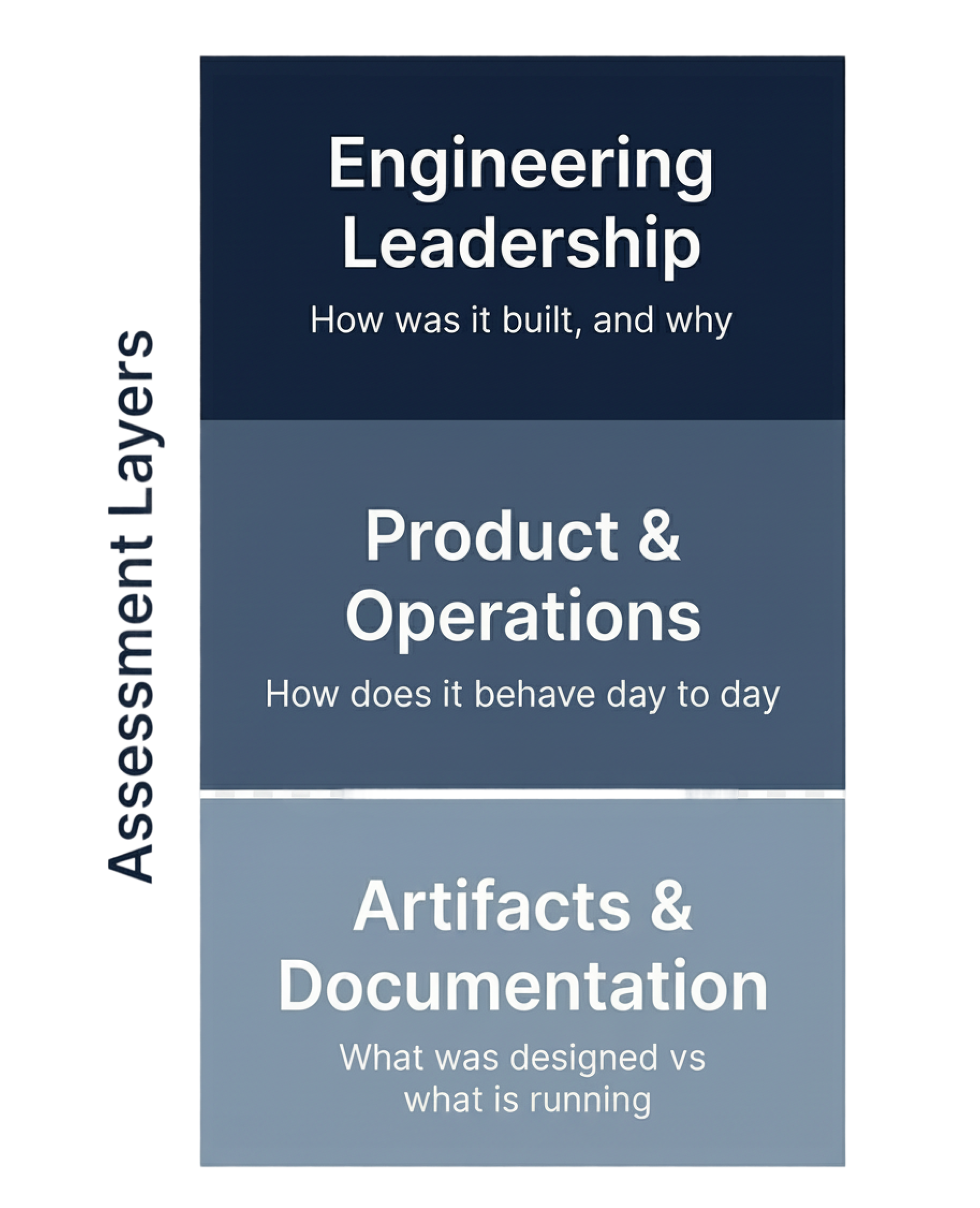

The assessment isn’t a form. It’s a structured review across three sources: engineering leadership, product and operations, and the artifacts themselves.

We start with engineering leadership — not to audit, but to understand. How was the system built? What decisions were made and why? What does the team already know about the AI footprint? Good engineering teams have good institutional knowledge. We want to draw it out, not interrogate it.

Then we move to product and operations — the people who live with the AI outputs day to day. What’s working well? What do they wish they understood better about how the system behaves? This is often where the most useful signal lives, because product and ops teams develop an intuitive sense of where the edges are, even when they don’t have formal frameworks to describe them.

Finally, we look at the artifacts — architecture documentation, model cards where they exist, data flow diagrams, third-party vendor agreements, anything that captures how the system was designed and how it’s expected to behave. We’re not grading the documentation. We’re using it to triangulate against what we heard in conversations.
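The triangulation step can be sketched as a comparison between two sets of system names: those that appear in the artifacts and those that came up in conversations. This is a minimal illustration, assuming each source can be reduced to a set of names; the function and the sample names are hypothetical, and in practice matching entries takes judgment rather than exact string equality.

```python
def triangulate(documented: set[str], reported: set[str]) -> dict[str, set[str]]:
    """Compare systems named in artifacts with systems named in interviews."""
    return {
        "confirmed": documented & reported,     # appears in both sources
        "undocumented": reported - documented,  # people mentioned it, artifacts do not
        "unmentioned": documented - reported,   # in artifacts, but nobody brought it up
    }


# Hypothetical example data.
from_artifacts = {"recommendation engine", "fraud scoring", "legacy classifier"}
from_interviews = {"recommendation engine", "fraud scoring", "support-ticket triage"}

result = triangulate(from_artifacts, from_interviews)
for bucket in ("confirmed", "undocumented", "unmentioned"):
    print(bucket, sorted(result[bucket]))
```

The two mismatch buckets are the useful ones. An undocumented system is a candidate for the inventory, and an unmentioned one is worth a follow-up question about whether it is still running.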

The whole process typically takes one to two weeks for a company of this size. At the end, we have a complete picture.

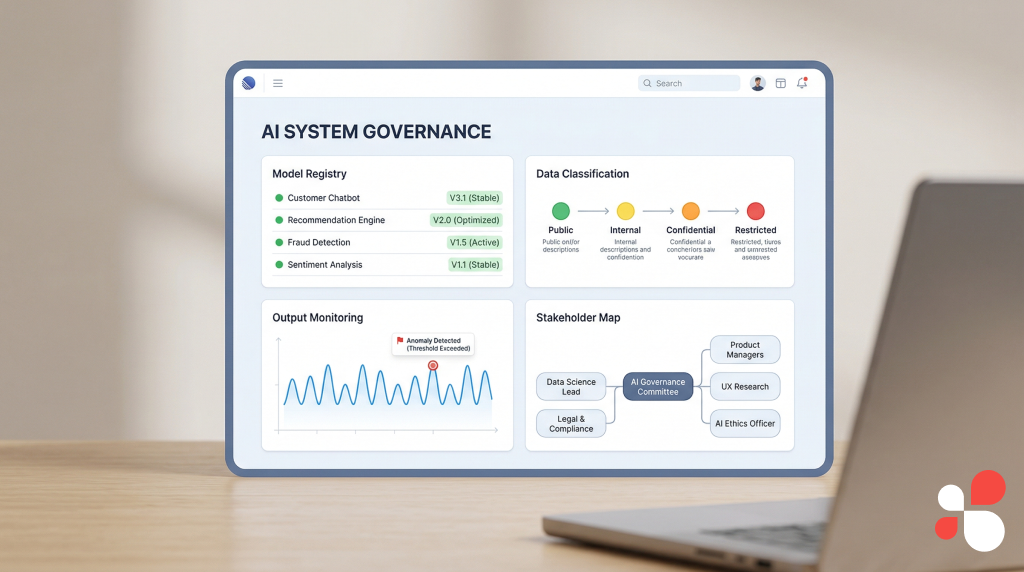

What does an AI governance assessment deliver?

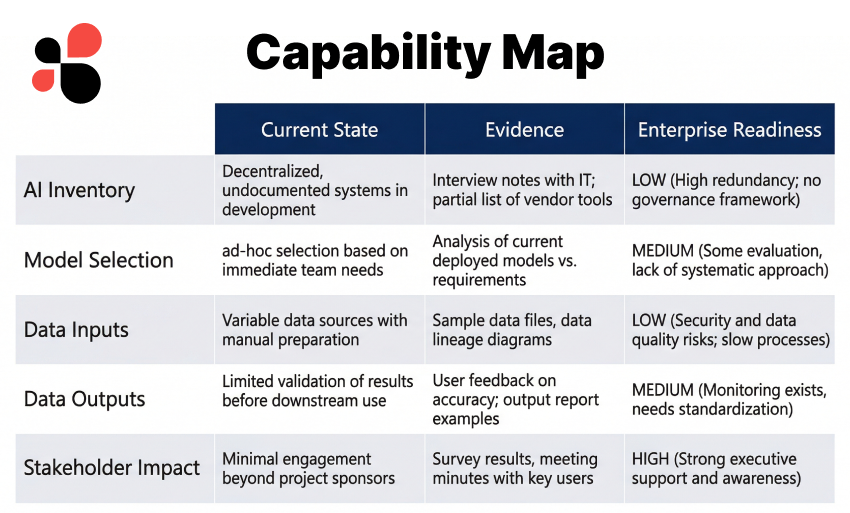

The deliverable from a posture assessment isn’t a gap analysis. It’s a capability map.

We document what’s running across each of the five dimensions, describe how it reads from an enterprise procurement perspective, and identify where additional documentation or instrumentation would strengthen the story. Every finding is framed in terms of what’s already working and what would make it clearer to an external audience.

The goal is a document the CTO can use in two ways: internally, as the foundation for the governance work we’re about to do together, and externally, as the basis for confident answers when enterprise procurement starts asking questions.

By the time the assessment is complete, the client knows exactly what they have. That’s not a small thing. As AI moves from experimentation to deployment, governance is the difference between scaling successfully and stalling out. Scaling successfully starts with knowing what you’re scaling.

What comes after the posture assessment?

With the posture assessment in hand, we move into the part of the engagement where the real infrastructure gets built. Part 2 covers how we establish the measurement layer — risk scoring by use case, data governance at every stage of the AI pipeline, and the instrumentation that turns a governance posture into something demonstrable.

Because a procurement team doesn’t just want to know that you take governance seriously. They want to see it.

Segev Shmueli is the founder of ProductiveHub and a Fractional CTO specializing in production AI systems for enterprise clients in healthcare, finance, and regulated industries.